Opportunistic Assessment of Bone Density in the Cervical Spine Using Dental Cone Beam Computed Tomography

For the past three years, I have been researching the use of dental Cone Beam Computed Tomography (CBCT) for opportunistic bone density assessment at the University of Pennsylvania under Dr. Chamith Rajapakse. The study examines whether routine dental CBCT scans, which are more widely available, less expensive, and lower in radiation dose than conventional CT, can be used to detect early signs of osteoporosis.

Our primary analysis examines radiographic density patterns in the C3 cervical vertebra. To support cross-anatomical validation, we also assess tooth density and femur CT scans.

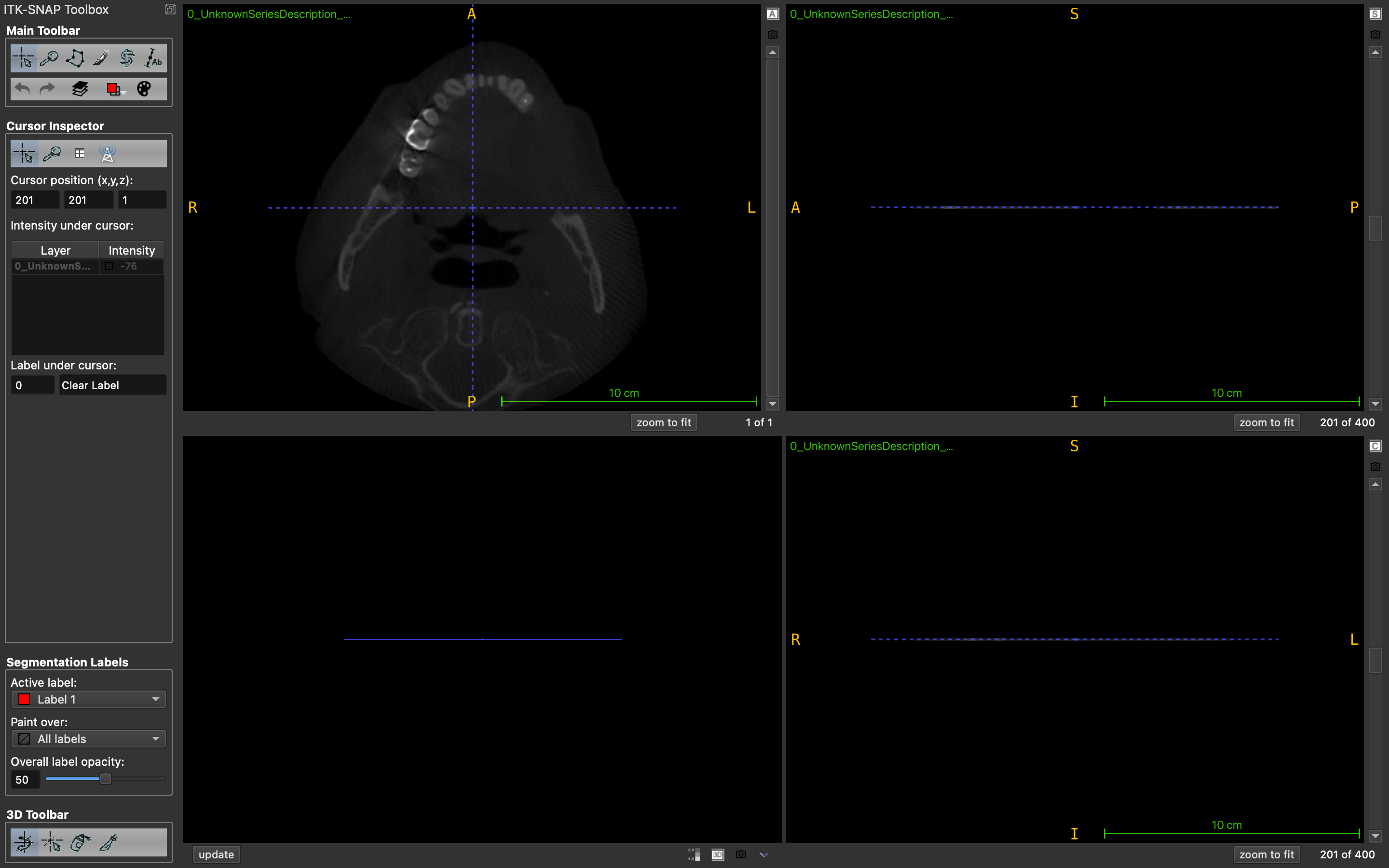

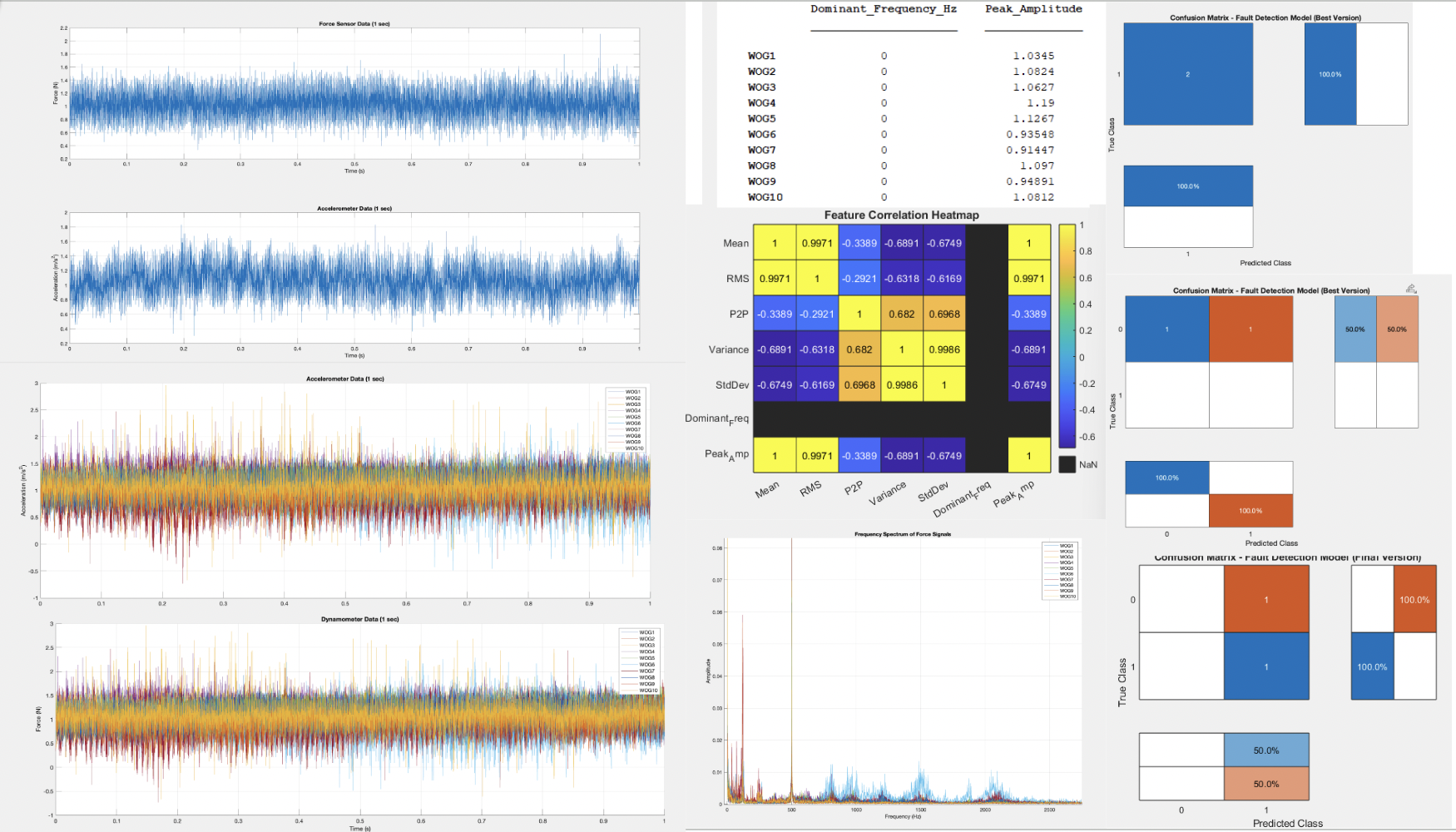

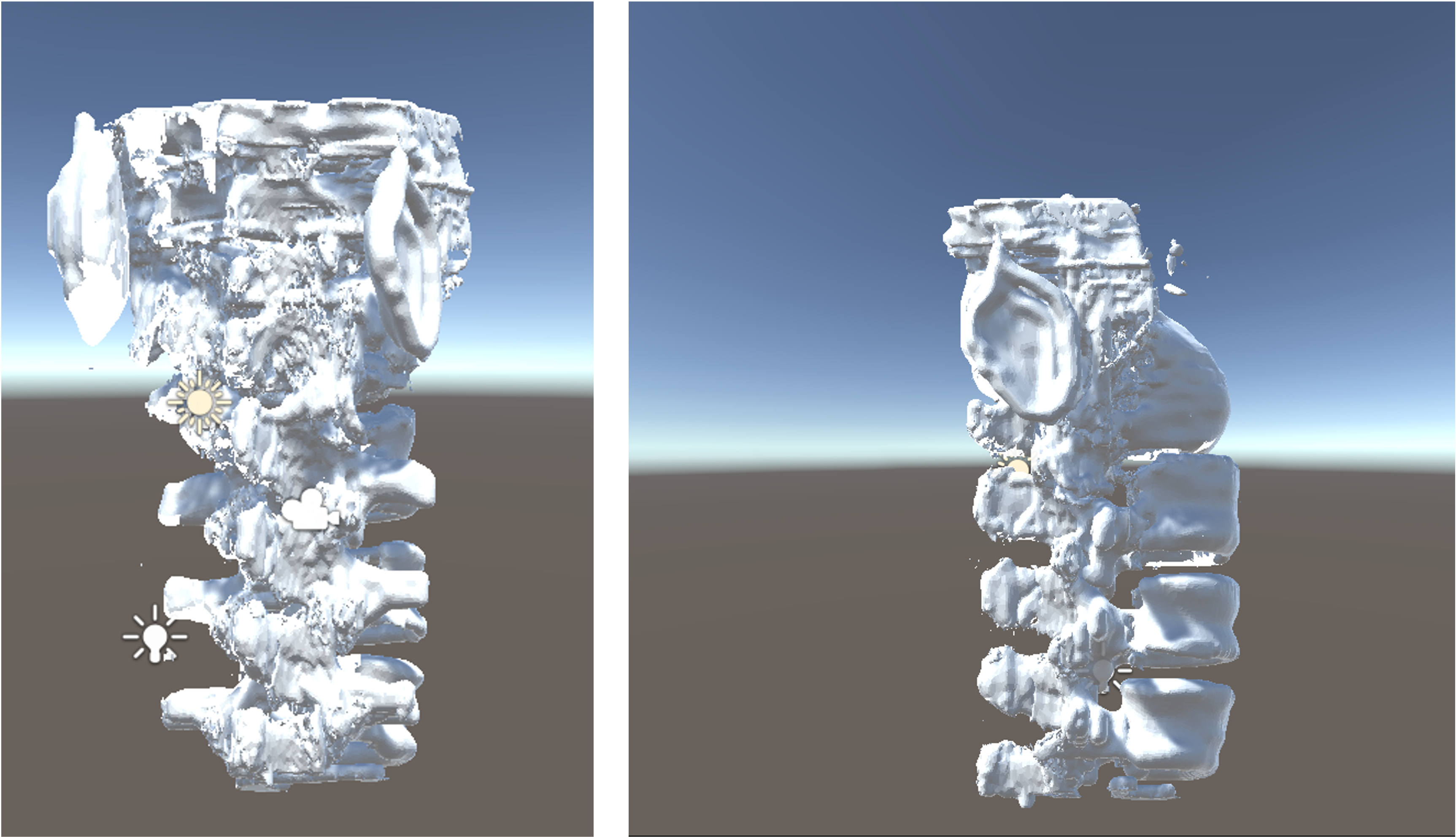

We extracted voxel-level intensity features from more than 1,000 patient scans, calibrated density values across imaging modalities, and compared metrics derived from CBCT with the clinical gold standard, DXA-based T-scores.
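As a rough illustration of the feature-extraction and comparison steps described above, the sketch below summarizes voxel intensities inside a region of interest and computes a Pearson correlation against reference scores. It is a minimal example under stated assumptions, not the pipeline used in the study: the function names `roi_intensity_features` and `pearson_r`, the choice of summary statistics, and the tiny synthetic volume are all hypothetical.

```python
import numpy as np

def roi_intensity_features(volume, mask):
    """Summarize voxel intensities inside a region of interest (e.g. a C3 mask).

    `volume` is a 3-D array of scan intensities and `mask` is a boolean array
    of the same shape. Both names are illustrative, not from the study.
    """
    voxels = volume[mask]  # 1-D array of the intensities selected by the mask
    if voxels.size == 0:
        raise ValueError("mask selects no voxels")
    return {
        "mean": float(voxels.mean()),
        "std": float(voxels.std()),
        "p10": float(np.percentile(voxels, 10)),
        "p90": float(np.percentile(voxels, 90)),
    }

def pearson_r(x, y):
    """Pearson correlation between a per-patient feature and reference scores."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return float(np.corrcoef(x, y)[0, 1])

# Synthetic stand-in for a scan: a 2x2x2 block of intensity 100 in a zero volume.
volume = np.zeros((4, 4, 4))
volume[1:3, 1:3, 1:3] = 100.0
mask = volume > 0  # hypothetical segmentation mask
features = roi_intensity_features(volume, mask)
```

In practice, one feature per patient (for example the ROI mean) would be collected into an array and passed to `pearson_r` together with the matching DXA T-scores.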

In preliminary results, I found strong associations between CBCT intensity patterns and DXA measurements, suggesting that CBCT could serve as an accessible, opportunistic screening method, especially for patients who regularly undergo dental imaging but may not receive dedicated bone density evaluations.

This research has broader clinical implications: if dental CBCT scans can reliably flag low bone density, dentists and oral surgeons could play a critical role in identifying at-risk individuals earlier, enabling timely medical follow-up.

Our ongoing work focuses on refining segmentation methods, normalizing scan data for cross-machine compatibility, and developing machine-learning models to automate risk classification with greater precision.
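One simple way to think about cross-machine normalization is to standardize each feature within the scanner that produced it. The sketch below does this with per-scanner z-scores. This is an assumption chosen for illustration, not the normalization method used in this work, and the function name `zscore_by_scanner` and the scanner labels are hypothetical.

```python
import numpy as np

def zscore_by_scanner(values, scanner_ids):
    """Standardize a feature separately within each scanner group.

    Hypothetical helper: per-scanner z-scoring is only one possible
    harmonization strategy and is used here as a stand-in.
    """
    values = np.asarray(values, dtype=float)
    scanner_ids = np.asarray(scanner_ids)
    out = np.empty_like(values)
    for scanner in np.unique(scanner_ids):
        idx = scanner_ids == scanner
        group = values[idx]
        sd = group.std()
        # A constant group has no spread, so map it to zeros instead of dividing by 0.
        out[idx] = (group - group.mean()) / sd if sd > 0 else 0.0
    return out

# Two hypothetical scanners whose raw intensities differ by a factor of 10.
raw = [1.0, 2.0, 3.0, 10.0, 20.0, 30.0]
scanners = ["a", "a", "a", "b", "b", "b"]
normalized = zscore_by_scanner(raw, scanners)
```

After normalization the two groups share the same scale, so a downstream classifier sees comparable values. The approach implicitly assumes that each scanner images a similar patient population, which would need to be checked before relying on it.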

Download My Paper (PDF)